How News Sources Portray AI Regulation Policy

This chart shows how major news sources across the ideological spectrum frame AI regulation policy, from left-leaning to right-leaning perspectives.

Many of the media biases we observe, in both news coverage and political rhetoric, stem from the fundamental differences in policy stances held by the major political parties. One such key point of conflict is artificial intelligence (AI) regulation. As artificial intelligence technologies rapidly evolve and integrate into everyday life, the question of how they should be governed has emerged as a central and increasingly urgent policy debate in the United States. From concerns about misinformation and deepfakes to issues surrounding job displacement, data privacy, and national security, AI regulation sits at the intersection of innovation and risk.

Understanding the Democratic and Republican stances on AI regulation is critical for voters, educators, researchers, and policymakers seeking to navigate this complex and evolving issue. While both parties acknowledge the transformative potential of AI, they diverge significantly in how they approach oversight, the role of government, and the balance between fostering innovation and protecting the public.

The Democratic Stance on AI Regulation

The Democratic stance on AI regulation is largely centered on the need for proactive government oversight to ensure that artificial intelligence technologies are developed and deployed responsibly. Democrats emphasize the importance of protecting consumers, safeguarding civil rights, and mitigating risks associated with misinformation, algorithmic bias, and data misuse. Their approach generally supports the creation of regulatory frameworks that promote transparency, accountability, and ethical standards in AI systems.

Democratic policymakers have advocated for stronger federal involvement in setting AI standards, particularly in areas such as data privacy, facial recognition, and automated decision-making. The Biden administration has taken steps toward this goal through initiatives like executive orders on AI safety and the development of guidelines for responsible AI use. These efforts aim to establish guardrails that reduce harm while still allowing innovation to continue.

In addition, Democrats often highlight the societal impacts of AI, including concerns about job displacement and economic inequality. Many support policies that invest in workforce retraining and education to help workers adapt to technological change. They also stress the importance of preventing discriminatory outcomes in AI systems, particularly in sectors like hiring, lending, healthcare, and criminal justice.

Public opinion data reflects strong support for regulation among Democratic voters, with surveys indicating that a majority favor increased government oversight of AI technologies to address risks related to privacy, misinformation, and bias. However, confidence in the government’s ability to effectively regulate AI remains more measured, with only 36% of Democrats expressing trust in current regulatory capacity, according to Pew Research. This underscores both the demand for oversight and the challenges associated with implementing effective AI governance.

Politicians Who Support AI Regulation

36% of Democrats say they trust the U.S. to effectively regulate AI.

Joe Biden

“AI can help deal with some very difficult challenges like disease and climate change, but it also has to address the potential risks to our society, to our economy, to our national security.”

Charles E. Schumer

“Some experts predict that in just a few years the world could be wholly unrecognizable from the one we live in today. That is what AI is: world-altering.”

The Republican Stance on AI Regulation

The Republican stance on AI regulation generally emphasizes limited government intervention and a strong focus on fostering innovation and maintaining global competitiveness. Republicans tend to view artificial intelligence as a critical driver of economic growth, national security, and technological leadership, particularly relative to strategic competitors such as China. As a result, they are often cautious about imposing regulations that could slow development or place U.S. companies at a disadvantage.

Rather than broad federal mandates, Republicans typically support a more decentralized and market-driven approach to AI governance. This includes encouraging private sector leadership, voluntary standards, and industry-led best practices. Many Republican policymakers argue that innovation thrives in flexible environments and that premature or overly restrictive regulation could stifle technological progress and entrepreneurship.

Republicans have also highlighted concerns about regulatory overreach, particularly regarding content moderation, data use, and algorithmic transparency. Some argue that government involvement in these areas could lead to unintended consequences, including limitations on free speech or increased bureaucratic control over emerging technologies. This perspective aligns with the broader conservative emphasis on reducing federal oversight and preserving individual and corporate autonomy.

At the same time, Republicans do recognize certain risks associated with AI, particularly in areas such as national security, cybersecurity, and defense applications. There is bipartisan agreement on the need to address threats posed by adversarial uses of AI, including disinformation campaigns and military advancements. However, the Republican approach typically prioritizes targeted interventions in high-risk areas over comprehensive regulatory frameworks, reflecting a preference for balancing risk mitigation with continued innovation. Public opinion data also shows that Republican voters tend to express relatively higher confidence in the government’s ability to manage AI risks, with 54% indicating trust in U.S. regulatory capacity, according to Pew Research.

Politicians Who Oppose AI Regulation

54% of Republicans say they trust the U.S. to effectively regulate AI.

Ted Cruz

“My view is if there’s going to be killer robots I’d rather they be American killer robots than Chinese.”

Kevin McCarthy

“Advancements in industries such as manufacturing, defense, energy, and artificial intelligence all have the power to propel our society forward.”

Political Polarization and Public Policy on AI Regulation in the United States

The political positions on AI regulation have become increasingly reflective of broader ideological divides in American governance. While both parties acknowledge the transformative impact of artificial intelligence, their approaches to managing its risks and opportunities differ significantly. The federal government’s stance on AI regulation continues to evolve, often shaped by shifts in political leadership, legislative priorities, and the pace of technological advancement.

At a high level, the divide can be summarized as follows:

- Democratic approach: Emphasis on comprehensive federal frameworks addressing data privacy, algorithmic accountability, and ethical AI use

- Republican approach: Preference for limited regulation, prioritizing innovation, economic competitiveness, and reduced federal intervention

This divergence has contributed to ongoing debates in Congress over how best to regulate emerging technologies without hindering growth.

Public opinion on AI regulation reflects a growing awareness of both its benefits and risks. Key concerns shaping the debate include:

- Misinformation and deepfakes

- Misuse of personal data and privacy risks

- Potential economic disruption and job displacement

These concerns have increased demand for oversight, particularly among Democratic voters. At the same time, many Americans express concern that excessive regulation could slow innovation or reduce the United States’ competitive edge in the global technology race.

Industry and expert perspectives also play a key role in shaping policy discussions. Stakeholders across sectors have called for balanced approaches that combine innovation with safeguards. These perspectives generally fall into two categories:

- Support for voluntary standards and industry-led guidelines

- Support for formal regulation to ensure accountability and consistency

This intersection of political ideology, public concern, and industry influence continues to define the evolving landscape of AI policy in the United States.

A Brief History of AI Regulation Policy in the U.S.

The history of AI regulation in the United States is relatively recent compared to other policy areas, reflecting the rapid emergence of artificial intelligence as a transformative technology. Early discussions around AI governance began in the 2010s, as machine learning systems became more widely adopted across industries such as finance, healthcare, and defense. Initial policy efforts were largely exploratory, focusing on research and innovation and on maintaining U.S. leadership in technological development rather than on imposing strict regulations.

During the late 2010s and early 2020s, concerns about the societal impact of AI grew. Issues such as algorithmic bias, data privacy, surveillance, and misinformation brought increased attention from lawmakers and regulators. Federal agencies and advisory bodies started publishing guidelines and reports on the ethical use of AI, while Congress held hearings to better understand the risks and opportunities associated with the technology.

A significant shift occurred in the early 2020s as AI systems became more powerful and publicly accessible. The rise of generative AI tools, capable of producing realistic text, images, and videos, intensified debates around deepfakes, intellectual property, and information integrity. In response, the Biden administration introduced executive actions to promote safe, secure, and trustworthy AI, marking one of the first coordinated federal efforts to establish guardrails for AI development.

At the state level, various jurisdictions began experimenting with their own approaches to AI-related issues, particularly in areas such as facial recognition and data protection. However, unlike more established policy areas, there is still no comprehensive federal law governing artificial intelligence. As of the mid-2020s, AI regulation in the United States remains a developing framework, shaped by ongoing technological advancements, policy experimentation, and growing public scrutiny.

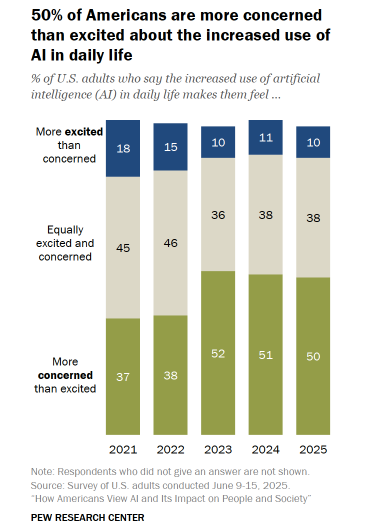

Half of U.S. adults say the increased use of AI in daily life makes them feel more concerned than excited, according to Pew Research.

Current Federal and International Policy on AI Regulation

Federal policy on AI regulation in the United States is still developing, with no single comprehensive law governing the use and deployment of artificial intelligence. Instead, current efforts consist of executive actions, agency-level guidelines, and proposed legislation aimed at addressing specific risks. The Biden administration has taken a leading role in shaping federal AI policy through executive orders focused on safety, transparency, and accountability. These initiatives emphasize responsible AI development, including requirements for testing advanced systems, improving data privacy protections, and reducing algorithmic bias.

Federal agencies have also begun integrating AI oversight into their respective domains. For example, agencies responsible for consumer protection, financial regulation, and national security are exploring how AI technologies impact their sectors. However, without unified legislation, regulatory authority remains fragmented, leading to ongoing debates about the need for a cohesive national framework.

At the state level, policies vary widely, with some states introducing restrictions on technologies like facial recognition and automated decision-making, while others prioritize innovation-friendly environments. This creates a patchwork of regulatory approaches, similar to other emerging technology sectors.

Internationally, AI regulation is evolving more rapidly in some regions. The European Union has taken a more comprehensive approach through its AI Act, which classifies AI systems based on risk levels and imposes corresponding regulatory requirements. This framework is often cited as one of the most advanced attempts to regulate AI globally.

Global organizations are also contributing to the policy landscape. The United Nations has emphasized the importance of ethical AI development and international cooperation, while the Organisation for Economic Co-operation and Development has established principles promoting trustworthy AI, including transparency, accountability, and human-centered values.

As countries take different approaches, the global policy environment for AI regulation is becoming increasingly complex. The United States continues to balance domestic priorities with international competitiveness, while also engaging in global discussions about setting standards for safe and ethical AI use.

What the Future Holds

The future of AI regulation policy in the United States will likely be shaped by the ongoing tension between innovation and oversight. As artificial intelligence continues to advance at a rapid pace, policymakers will face increasing pressure to establish clearer and more comprehensive regulatory frameworks. The debate between Democratic and Republican views on AI regulation reflects broader questions about the role of government, the limits of technological development, and the responsibility to protect individuals and society from potential harm.

One likely area of development is the introduction of more unified federal legislation. While current efforts rely heavily on executive actions and agency guidance, there is growing bipartisan recognition that a cohesive national strategy may be necessary to address complex challenges such as data privacy, algorithmic accountability, and the misuse of generative AI. However, disagreements over the scope and intensity of regulation will continue to influence how such policies are designed and implemented.

Technological advancements will also play a key role in shaping future policy. As AI systems become more capable, particularly in areas like autonomous decision-making and content generation, concerns about misinformation, security, and ethical use are expected to intensify. These developments may drive stronger calls for oversight, especially if high-profile incidents highlight the risks associated with unregulated AI deployment.

At the same time, global competition will remain a major factor. Policymakers will need to balance domestic regulatory goals with the need to maintain U.S. leadership in artificial intelligence. This includes navigating international standards and collaborating with allies while competing with other major powers in the development of advanced technologies.

Ultimately, the future of AI regulation will depend on how effectively policymakers can strike a balance between fostering innovation and ensuring accountability. As public awareness grows and political debate continues, AI regulation is likely to remain a central issue in American policy discussions, influencing not only technology governance but also broader conversations around economic growth, security, and civil liberties.